Context

When growth outpaces learning

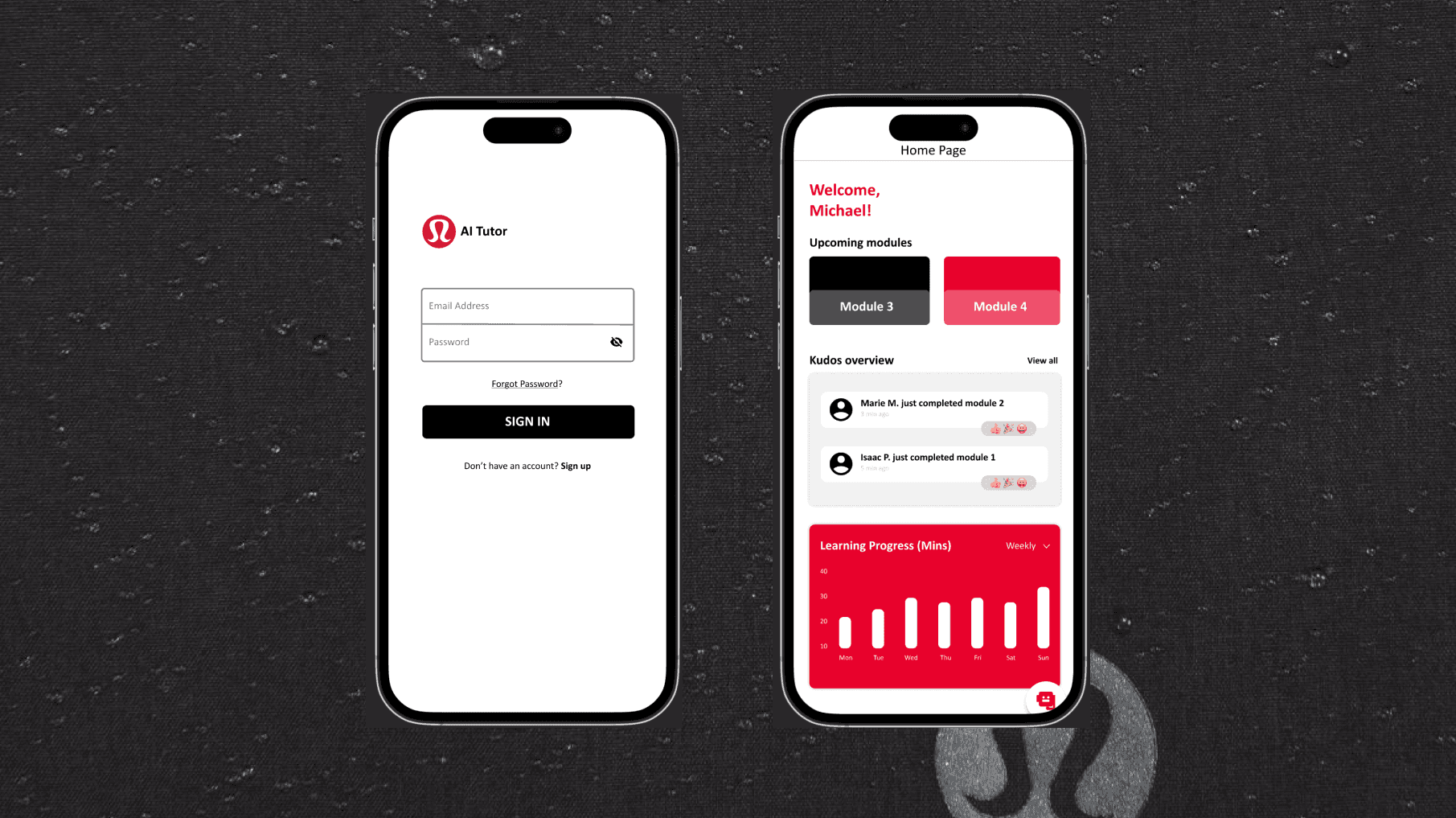

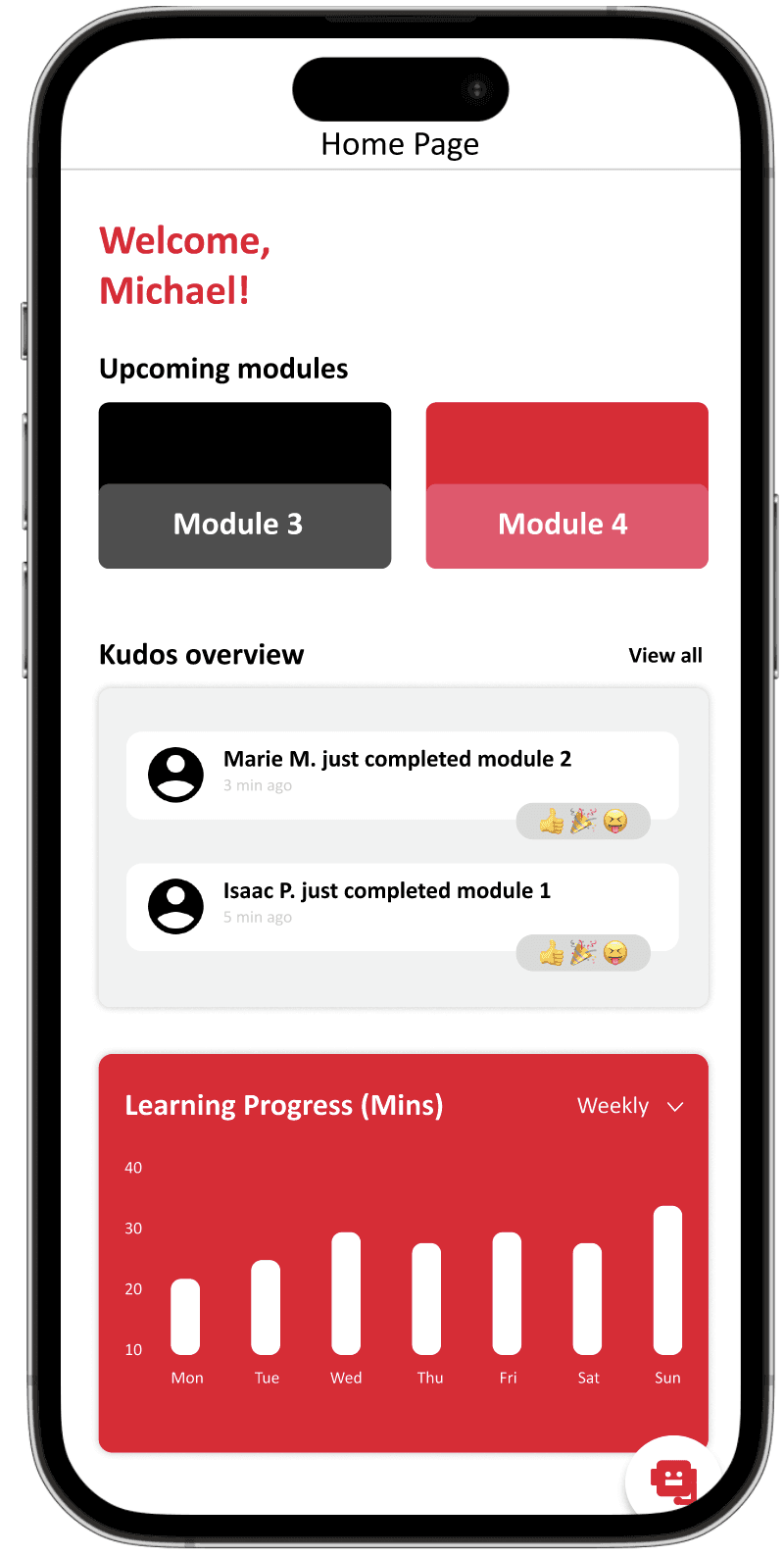

Lululemon educators are retail associates responsible for delivering personalized guest experiences. As the brand expanded across 700+ global stores, onboarding required associates to absorb more product knowledge in less time.

Two findings from interviews and observation sessions directly shaped the product direction.

The Real Problem

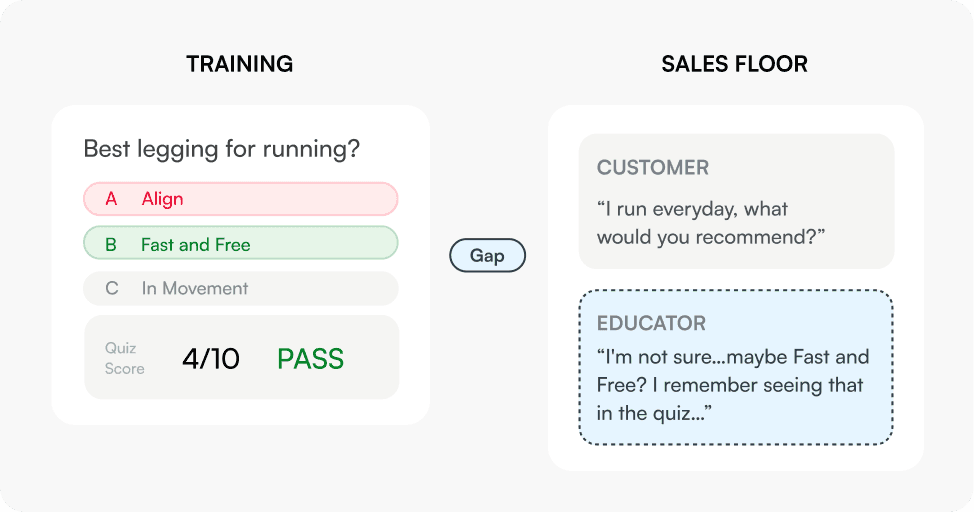

From better onboarding to better recall

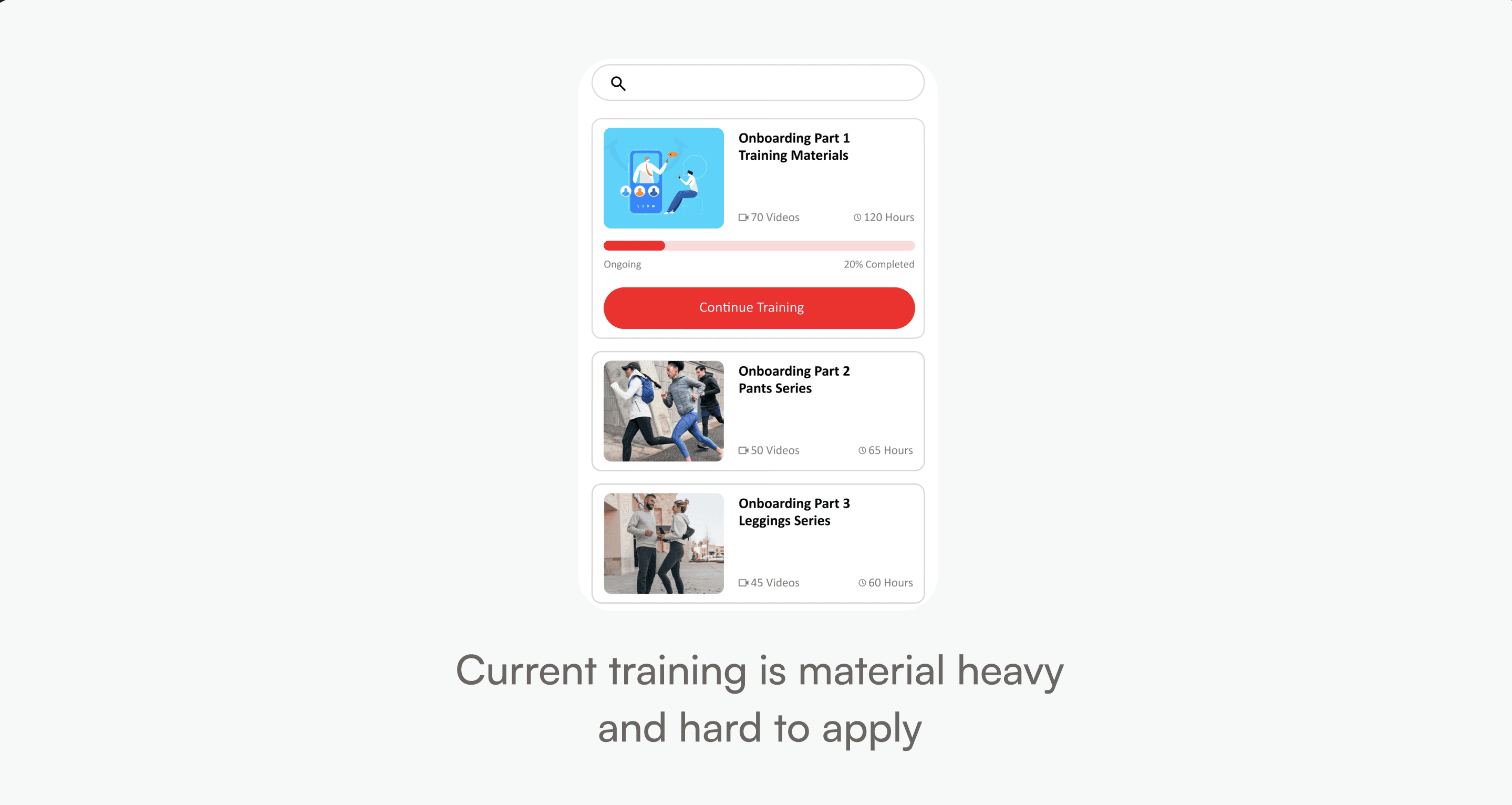

What it looked like

Lululemon needed a better onboarding tool.

What I actually had to solve

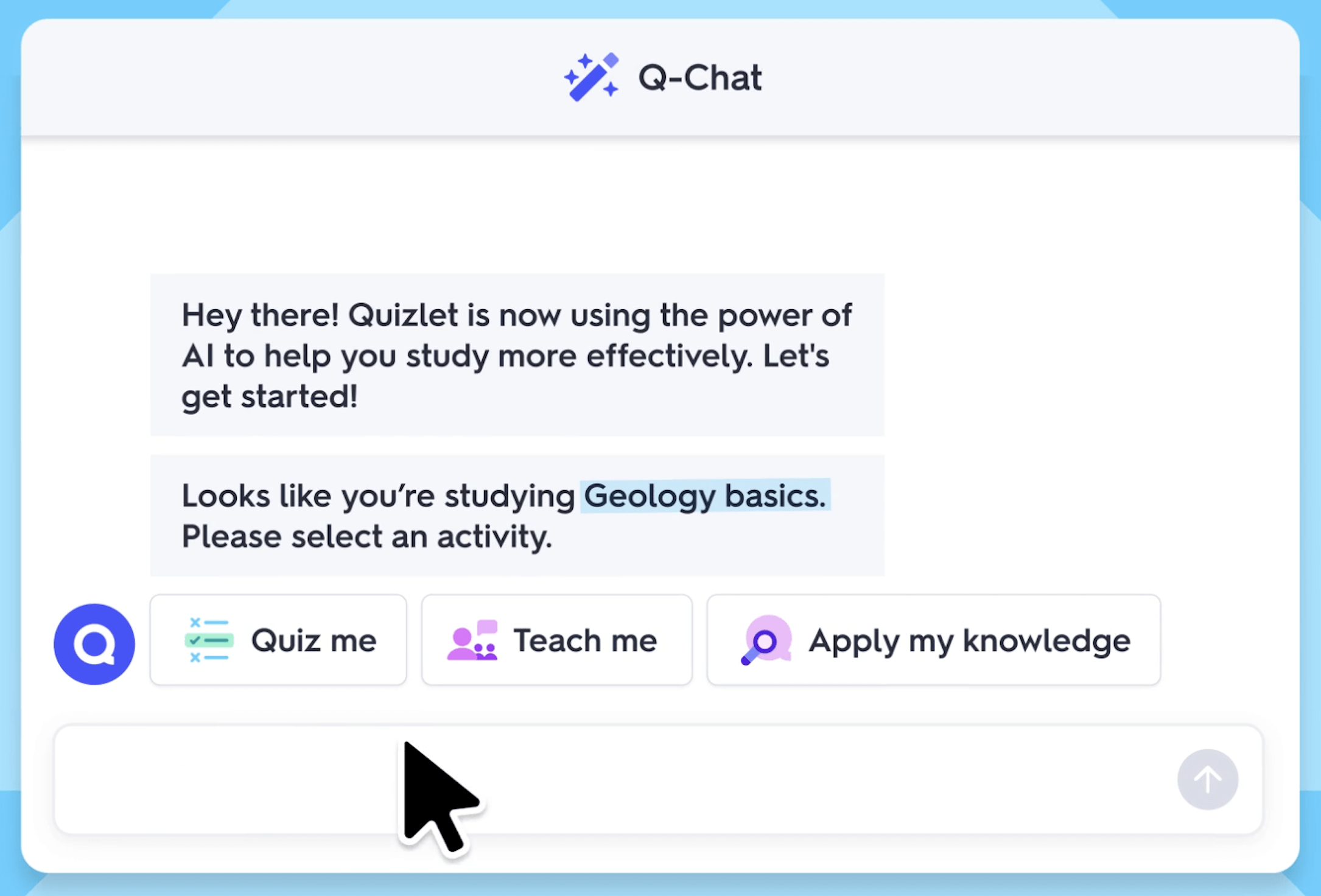

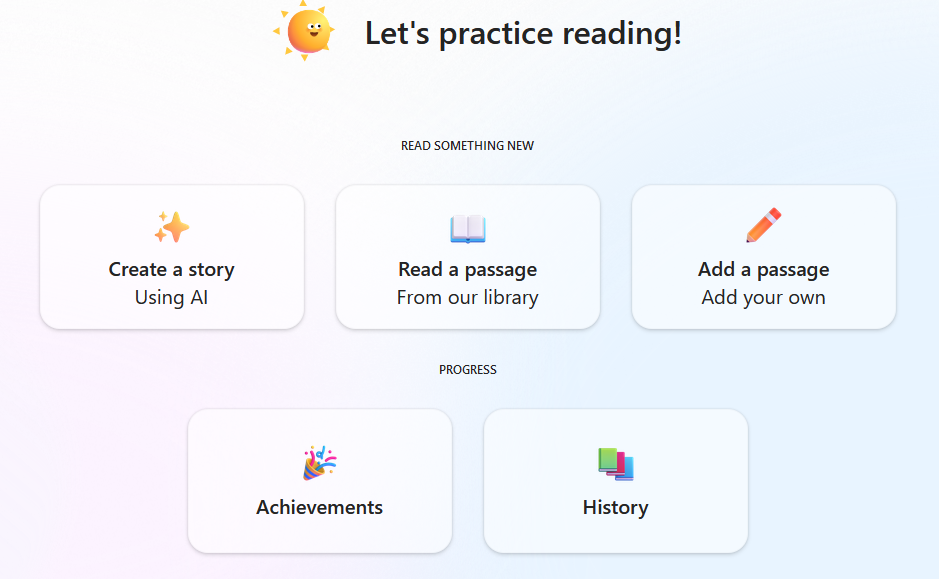

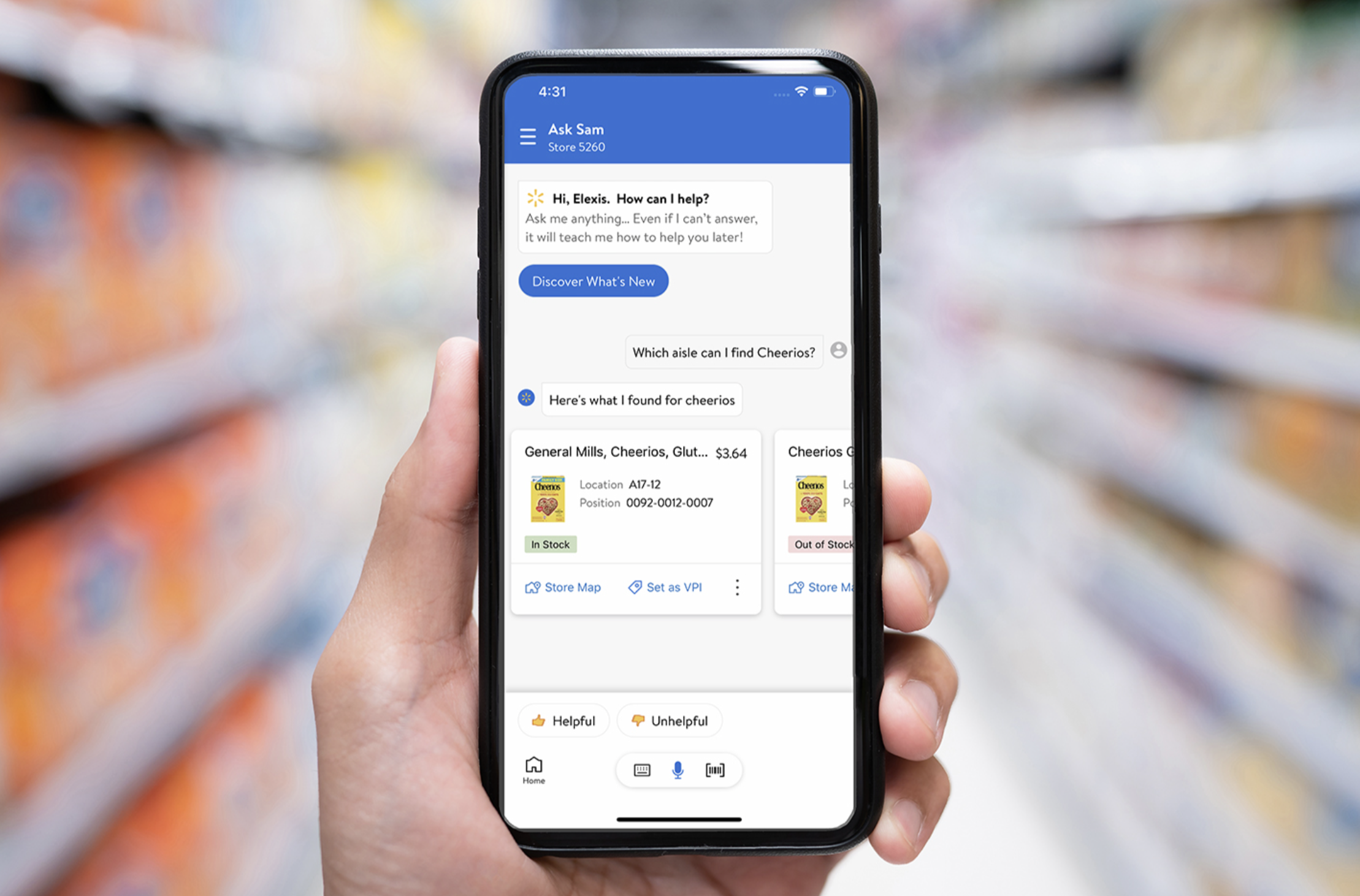

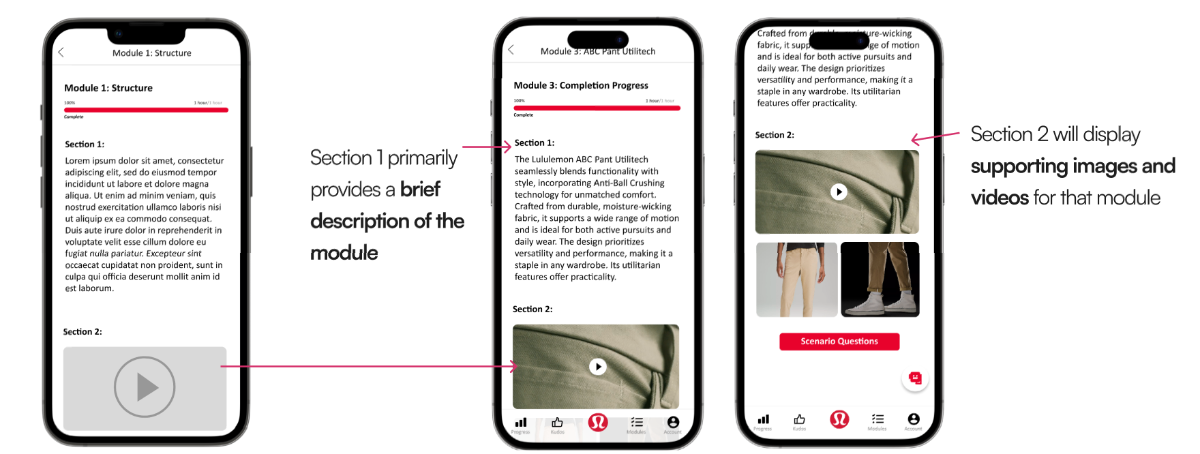

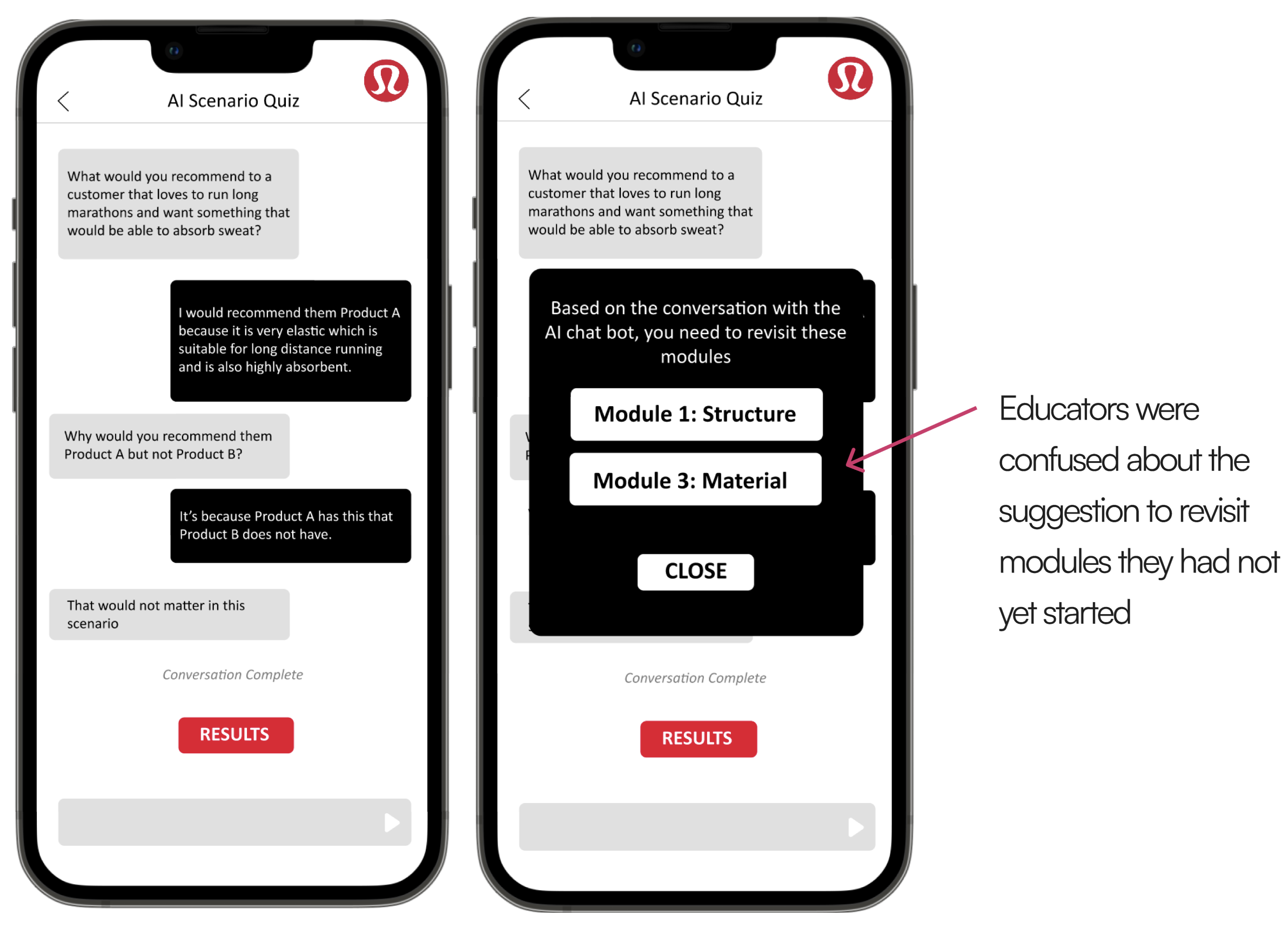

The real issue was not content delivery alone, and a cleaner onboarding tool would not have solved it. Educators needed to retain product knowledge and apply it confidently in realistic guest interactions, not just move through static modules.

Constraints

What shaped the direction

Time constraint

The product had to be designed, prototyped, and tested within a four-month capstone timeline, so we had to prioritize the highest-value learning flows.

AI feasibility

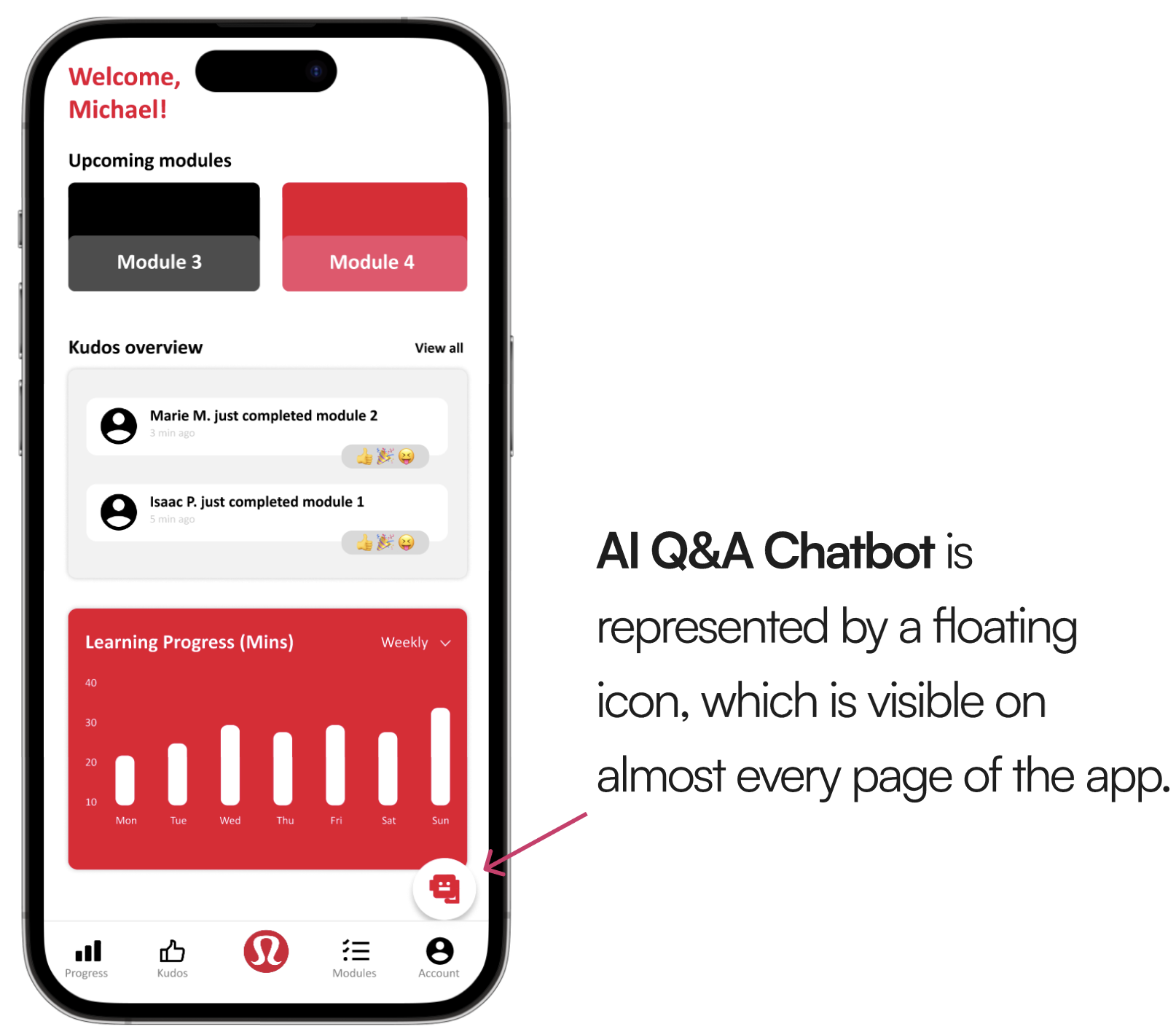

Real-time AI interactions had to remain lightweight and structured enough to feel useful on mobile without degrading performance.

Brand alignment

The interface needed to feel like part of Lululemon's internal ecosystem, not a separate experimental app. Familiarity was non-negotiable.

Tradeoff summary

These constraints pushed the product toward structured scenario-based learning rather than a fully open-ended AI tutor. That tighter scope turned out to be the right call.